Cypress to Playwright Migration

Test Engineer III • Playwright • TypeScript • 600+ End-to-End Tests

Case study describing the redesign and migration of a large-scale end-to-end test automation system used to validate a complex web application with more than 600 automated regression scenarios.

I took ownership of re-architecting the automation framework and migrating the test suite to Playwright. The work eliminated flaky interdependent tests and enabled safe parallel execution, reducing regression runtime from 2.5+ hours to under 40 minutes.

Rather than treating the effort as a simple framework replacement, the migration was approached as a systems redesign of the automation platform itself. The goal was to create an architecture that produced fast, deterministic feedback for engineers while remaining maintainable and scalable as the product and test coverage continued to grow.

Project Snapshot

- Role: Test Engineer III

- Scope: Drove redesign and migration of the end-to-end automation framework

- Framework: Playwright with TypeScript

- Scale: 600+ end-to-end tests

Key Outcomes

- 600+ end-to-end tests migrated to the new framework

- Regression runtime reduced from 2.5+ hours to under 40 minutes

- Parallel execution safely enabled through deterministic test isolation

- Flaky failures significantly reduced through stable selectors and deterministic synchronization

- Framework redesigned for maintainability and long-term scalability

- Automation restored as a reliable release signal for development and CI workflows

The Challenge

The legacy end-to-end suite had grown to roughly 600 tests, but its architecture made it slow, fragile, and difficult to maintain. Regression runs took more than 2.5 hours, and failures were common enough that engineers could not rely on the suite to gate releases.

The problems were not limited to tooling. The suite had accumulated interdependent tests, arbitrary wait times, inconsistent setup requirements, and weak visibility into true test coverage. As a result, failures frequently reflected automation instability rather than real product defects.

Slow execution

Full regression took more than 2.5 hours, making it impractical for engineers to run locally or wait on complete results before shipping changes.

Interdependent tests

Many tests depended on data created by earlier tests, leading to cascading failures and making root-cause analysis much harder.

Arbitrary waits

The suite frequently used fixed delays instead of waiting for deterministic UI states, which increased runtime and introduced timing-related flakiness.

Environment inconsistency

Tests could pass locally when an engineer manually prepared the required data, but the suite as a whole was too slow and brittle to run dependably on a developer machine.

Migration Goals

The goal of the migration was not simply to replace Cypress with Playwright. The larger objective was to rebuild the automation system so that it could provide fast, trustworthy feedback and scale with the product over time.

- Reduce regression runtime significantly

- Eliminate cross-test dependencies

- Replace arbitrary waits with deterministic synchronization

- Enable safe parallel execution

- Create a framework architecture that could scale as the application evolved

- Restore developer confidence in automation as a release-quality signal

Architecture Principles

- Test isolation by default: each scenario should establish or reference the exact state it needs without depending on prior execution.

- Selectors owned by abstractions: locator logic should live in page objects and component models rather than in test files.

- Shared configuration as a source of truth: routes, environment values, and framework defaults should be centralized rather than hardcoded across the suite.

- Authenticated setup should be reusable: global setup should establish the session once so both UI tests and API helpers can reuse the same authenticated state.

- UI validation, API provisioning: API helpers should provision deterministic prerequisite state while UI automation remains focused on user-facing behavior.

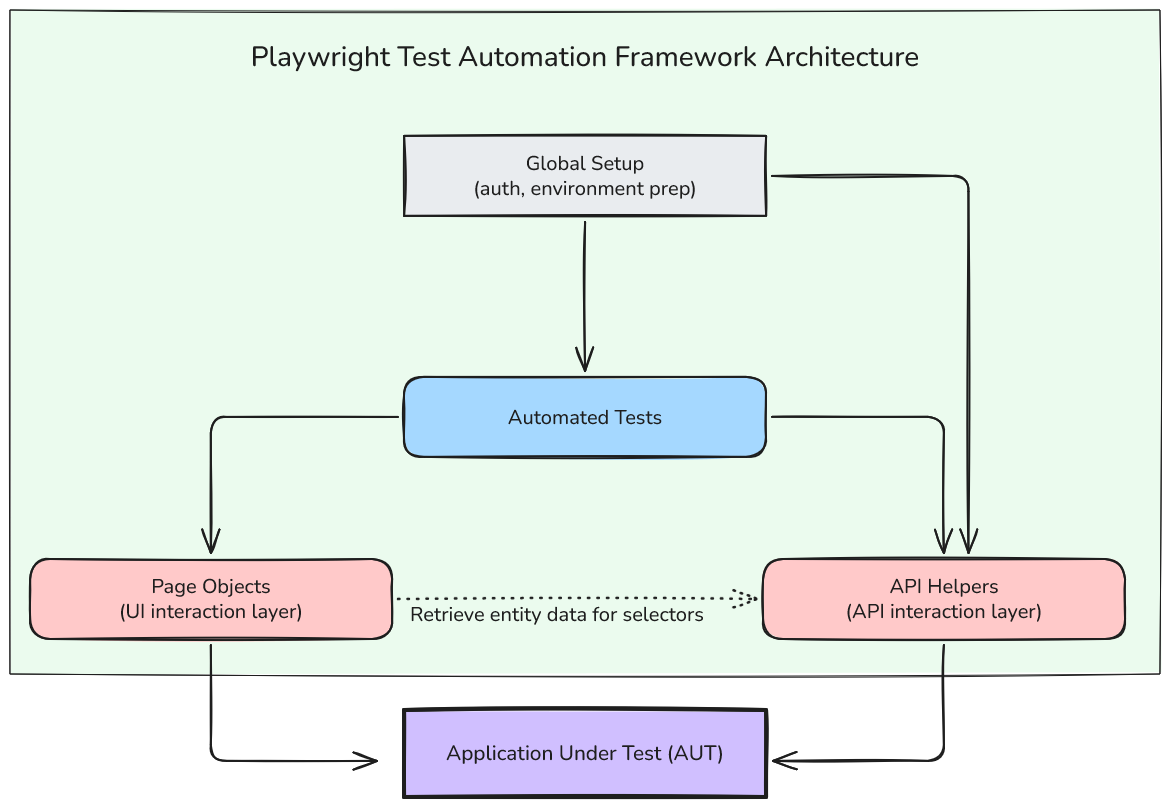

Framework Architecture and Test Orchestration

I implemented the new automation framework in Playwright with TypeScript, designing it around modularity, deterministic behavior, and maintainability. The architecture intentionally separated concerns between the test layer, the UI interaction layer, and the API and setup layer so that tests remained readable while shared behavior stayed centralized.

Rather than treating the migration as a simple tool swap, the framework was rebuilt so it could support isolated, parallelizable tests and continue scaling as the product grew.

Architecture of the Playwright test automation framework showing automated tests orchestrating UI interactions through page objects and backend operations through API helpers. Global setup prepares shared environment configuration, while both interaction layers communicate with the application under test.

Environment Configuration

I made test execution configurable through dotenv, allowing environments, worker counts, and other runtime settings to be managed without hardcoding them into the framework. This allows the same suite to run consistently across local development, CI, and environment-specific workflows.

Standardizing configuration also reduces onboarding friction for other engineers and makes execution behavior more predictable. Instead of relying on ad hoc setup knowledge, engineers can run the framework using shared, explicit configuration conventions.

Configuration is kept declarative so important execution behavior such as retries, worker count, base URL, and tracing can be managed centrally rather than scattered across the suite.

Example Playwright Configuration

```typescript
import 'dotenv/config'
import { defineConfig } from '@playwright/test'
import { ENV, PLAYWRIGHT_DEFAULTS } from 'config/constants'

export default defineConfig({
  timeout: PLAYWRIGHT_DEFAULTS.timeout,
  retries: PLAYWRIGHT_DEFAULTS.retries,
  // PW_WORKERS (from .env or the shell) overrides the default worker count
  workers: Number(process.env.PW_WORKERS || PLAYWRIGHT_DEFAULTS.workers),
  reporter: 'html',
  use: {
    baseURL: ENV.baseUrl,
    // The application exposes data-test-id; getByTestId() defaults to data-testid
    testIdAttribute: 'data-test-id',
    // Record a trace only when a failed test is retried
    trace: 'on-first-retry'
  }
})
```

That makes the suite easier to run across environments and helps ensure local execution and CI execution behave in a more consistent and understandable way.
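One caveat with `Number(process.env.PW_WORKERS || ...)` is that a malformed value such as `PW_WORKERS=four` is truthy, so it becomes `NaN` instead of falling back to the default. A minimal sketch of a stricter parser, using a hypothetical `resolveWorkers` helper that the config would call as `workers: resolveWorkers(process.env.PW_WORKERS, PLAYWRIGHT_DEFAULTS.workers)`:

```typescript
// Hypothetical helper: parse a worker count from an environment value,
// falling back to the framework default when it is missing or malformed.
export function resolveWorkers(raw: string | undefined, fallback: number): number | string {
  if (raw === undefined || raw.trim() === '') {
    return fallback
  }
  const value = raw.trim()
  // Playwright also accepts a percentage of logical CPU cores, e.g. "50%".
  if (/^[1-9]\d*%$/.test(value)) {
    return value
  }
  const parsed = Number(value)
  // Reject NaN, zero, negative, and fractional values.
  return Number.isInteger(parsed) && parsed > 0 ? parsed : fallback
}
```

Keeping the parsing in a plain function means it can be unit tested without launching Playwright.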

Shared Types

To keep the framework consistent across layers, I centralized entity interfaces in a shared types.ts file. API helpers, page objects, component models, and tests all work from the same domain contracts, which improves readability and reduces ambiguity in setup and assertions.

Example Shared Types

```typescript
export interface AssetEntity {
  Id: string
  Name: string
  AssetType: string
}
```

This makes it easier to pass real domain entities through the framework instead of relying on ad hoc objects or raw selector strings scattered across the test layer.
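Because `AssetEntity` is the single contract, narrower shapes can be derived from it rather than redeclared. A small sketch, assuming the server assigns `Id` on creation (not confirmed by the source); `NewAssetPayload`, `buildNewAsset`, and the `'e2e-asset'` default are hypothetical:

```typescript
interface AssetEntity {
  Id: string
  Name: string
  AssetType: string
}

// Payload for creating an asset: every field except the (assumed) server-assigned Id.
type NewAssetPayload = Omit<AssetEntity, 'Id'>

// Hypothetical factory: fills in defaults so a test only states what it cares about.
function buildNewAsset(overrides: Partial<NewAssetPayload> = {}): NewAssetPayload {
  return {
    Name: 'e2e-asset',
    AssetType: 'Widget',
    ...overrides
  }
}
```

Compared with `Partial<AssetEntity>`, a derived type like this lets the compiler flag an object literal that includes `Id` or omits a required field.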

Constants and Shared Configuration

Shared framework configuration is centralized in config/constants.ts so that routes, environment configuration, and Playwright execution defaults can be managed in a single location.

Static routes such as the assets page are defined directly, while dynamic routes are generated using helper functions that accept typed domain entities. This avoids fragile string concatenation and ensures navigation logic stays consistent across the framework.

Centralizing configuration also simplifies environment management. Test execution defaults such as timeouts and worker counts can be defined once and reused by the Playwright configuration and other framework components.

Example constants.ts

```typescript
import type { AssetEntity } from 'config/types'

export const ENV = {
  baseUrl: process.env.BASE_URL ?? 'http://localhost:3000'
} as const

export const ROUTES = {
  assets: '/assets'
} as const

export const routeFor = {
  assetDetails: (asset: AssetEntity) => `${ROUTES.assets}/${asset.Id}`
} as const

export const PLAYWRIGHT_DEFAULTS = {
  timeout: 30000,
  retries: 2,
  workers: 4
} as const
```

Shared Utilities

Additional helper utilities support the broader automation ecosystem beyond browser interactions alone. These tools help manage environment state, test infrastructure, and supporting systems that the suite depended on.

- PowerShell helpers for executing commands on test devices

- VM management helpers for restarting machines or restoring snapshots

- Reusable resource managers for deterministic environment setup

These utilities are important because reliable end-to-end automation often depends on infrastructure readiness just as much as test logic.
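The source does not show `resourceManager.ts`, so the following is only a sketch of one common shape for such a helper, with hypothetical names: each setup step registers a matching cleanup, cleanups run in reverse (LIFO) order so dependent resources are released before the things they depend on, and a failing cleanup does not stop the others.

```typescript
type Cleanup = () => Promise<void> | void

// Hypothetical resource manager: tracks cleanups and releases them in LIFO order.
export class ResourceManager {
  private cleanups: Array<{ label: string; run: Cleanup }> = []

  // Run a setup step and remember how to undo it.
  async acquire<T>(
    label: string,
    setup: () => Promise<T> | T,
    teardown: (resource: T) => Promise<void> | void
  ): Promise<T> {
    const resource = await setup()
    this.cleanups.push({ label, run: () => teardown(resource) })
    return resource
  }

  // Release everything in reverse order, collecting failures instead of stopping early.
  async dispose(): Promise<void> {
    const errors: string[] = []
    while (this.cleanups.length > 0) {
      const { label, run } = this.cleanups.pop()!
      try {
        await run()
      } catch (error) {
        errors.push(`${label}: ${String(error)}`)
      }
    }
    if (errors.length > 0) {
      throw new Error(`Cleanup failed for ${errors.length} resource(s):\n${errors.join('\n')}`)
    }
  }
}
```

For example, a test might acquire a VM snapshot and then an asset, and a single `dispose()` call in teardown would delete the asset before restoring the VM.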

Test Organization

Tests are organized by feature area so the suite can scale in a predictable way as coverage expands. This makes navigation easier for contributors and keeps related workflows grouped together.

Example Test Organization

```text
playwright/
  config/
    constants.ts
    types.ts
  pageObjects/
    AppPage.ts
    AssetsPage.ts
    AssetCardModel.ts
    AssetDetailsPanel.ts
  api/
    AppApi.ts
    AssetApi.ts
  tests/
    assets.spec.ts
  utilities/
    powershell.ts
    vmManager.ts
    resourceManager.ts
  playwright.config.ts
  ...other Playwright files
```

Each automated test also includes the associated Xray test case key to maintain traceability between automated coverage and test management records.
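The source does not say how the key is attached. One lightweight convention, sketched here with hypothetical helpers and an illustrative key `PROJ-123`, is to validate the key and prefix it to the test title so results can be matched back to Xray records. Recent Playwright versions also accept a details object as the second argument to `test()`, such as `{ tag: '@PROJ-123' }` or an `annotation`, which keeps the key out of the title.

```typescript
// Default Jira-style issue key: uppercase project key, a dash, then a number.
const XRAY_KEY_PATTERN = /^[A-Z][A-Z0-9_]+-\d+$/

// Hypothetical helper: builds a test title that carries its Xray key.
export function xrayTitle(key: string, title: string): string {
  if (!XRAY_KEY_PATTERN.test(key)) {
    throw new Error(`Invalid Xray test key: "${key}"`)
  }
  return `[${key}] ${title}`
}

// Hypothetical helper: recovers the key from a title, e.g. when post-processing results.
export function xrayKeyFrom(title: string): string | undefined {
  const match = /^\[([A-Z][A-Z0-9_]+-\d+)\]/.exec(title)
  return match?.[1]
}
```

Usage would look like `test(xrayTitle('PROJ-123', 'user can view asset details'), async ({ page }) => { ... })`.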

Base Classes and Inheritance

I introduced shared base classes across both the UI and API layers to standardize repeated behavior such as navigation, common element access, request handling, and assertion patterns. This is a deliberate move away from scattered utility logic and toward a more consistent framework contract.

Page Objects and Component Models

The UI layer follows the Page Object Model pattern, as described by Martin Fowler, encapsulating UI interactions within page objects so that tests remain focused on behavior rather than selector details. Page objects represent complete screens or workflows and own navigation, high-level actions, and shared assertions. Component models represent smaller reusable parts of the interface such as cards, rows, panels, or grouped lists that can appear within those pages.

- Page objects handle navigation and top-level workflows

- Component models handle reusable UI structures within a page

- Tests interact through these abstractions instead of owning selectors directly

This separation reduces duplication, keeps tests focused on behavior rather than implementation details, and makes the framework much easier to extend. New coverage can be added by composing existing page objects and component models instead of redefining selectors and workflows repeatedly.

Page Objects

Page objects own navigation and high-level actions. When a test needs to interact with a specific row, card, or panel, the page object creates or returns the appropriate component model. This separation keeps tests readable and prevents UI details from leaking into the test layer.

In the UI layer, the base page object encapsulates shared browser interactions and common page elements so that feature-specific page objects only need to implement the logic unique to their area of the application.

The base page class provides shared navigation behavior and common page elements, while feature-specific page objects such as AssetsPage extend that base behavior for a particular screen. Component models like AssetCardModel and AssetDetailsPanel represent reusable UI elements within that page, keeping selector logic centralized and test workflows easy to read.

Example Base Page Class

```typescript
import { Locator, Page, Response } from '@playwright/test'

export abstract class AppPage {
  protected page: Page
  protected abstract pageUrl: string
  public headingTitle: Locator
  public headingSubtitle: Locator

  constructor(page: Page) {
    this.page = page
    this.headingTitle = this.page.getByTestId('page_title')
    this.headingSubtitle = this.page.getByTestId('page_subtitle')
  }

  public async navigateTo(): Promise<Response | null> {
    const response = await this.page.goto(this.pageUrl)
    await this.page.waitForLoadState('domcontentloaded')
    return response
  }

  public async reload(): Promise<void> {
    await this.page.reload()
    await this.page.waitForLoadState('domcontentloaded')
  }
}
```

Example Page Object

```typescript
import { expect, type Locator, type Page } from '@playwright/test'
import { ROUTES } from 'config/constants'
import type { AssetEntity } from 'config/types'
import { AppPage } from 'pageObjects/AppPage'
import { AssetCardModel } from 'pageObjects/AssetCardModel'
import { AssetDetailsPanel } from 'pageObjects/AssetDetailsPanel'

export class AssetsPage extends AppPage {
  protected override pageUrl = ROUTES.assets
  public container: Locator

  constructor(page: Page) {
    super(page)
    this.container = this.page.getByTestId('assets_page')
  }

  public async expectVisible(): Promise<void> {
    await expect(this.container).toBeVisible()
  }

  // Component models are created on demand for the entity the test cares about
  public getAssetCard(asset: AssetEntity): AssetCardModel {
    return new AssetCardModel(asset, this.page)
  }

  public getAssetDetailsPanel(): AssetDetailsPanel {
    return new AssetDetailsPanel(this.page)
  }
}
```

Component Models

Smaller UI elements are represented as component models. These are typically created by a page object and returned to the test when needed. This allows tests to work with typed domain entities instead of owning raw selectors directly.

Example Component Model

```typescript
import type { Locator, Page } from '@playwright/test'
import type { AssetEntity } from 'config/types'

export class AssetCardModel {
  public asset: AssetEntity
  private page: Page
  public locator: Locator

  constructor(asset: AssetEntity, page: Page) {
    this.asset = asset
    this.page = page
    this.locator = this.page.getByTestId(`asset-${this.asset.Id}`)
  }

  public async open(): Promise<void> {
    await this.locator.click()
  }
}
```

Example Details Panel

```typescript
import { expect, type Locator, type Page } from '@playwright/test'

export class AssetDetailsPanel {
  private page: Page
  public container: Locator
  public assetName: Locator

  constructor(page: Page) {
    this.page = page
    this.container = this.page.getByTestId('asset_details_panel')
    // Scope to the panel so a matching test id elsewhere on the page cannot collide
    this.assetName = this.container.getByTestId('asset_name')
  }

  public async expectVisible(): Promise<void> {
    await expect(this.container).toBeVisible()
  }

  public async expectAssetName(name: string): Promise<void> {
    await expect(this.assetName).toHaveText(name)
  }
}
```

The result is a cleaner separation of concerns: tests describe intent, page objects manage workflows, and component models handle specific reusable UI elements.

Using inheritance this way reduces duplicated code, improves consistency across page objects, and makes the framework easier for other engineers to contribute to without reinventing common patterns in each file.

API Layer

The API layer is designed as more than a set of CRUD wrappers. It encapsulates domain-specific setup logic, resource orchestration, and infrastructure-aware workflows so tests can create valid state quickly and deterministically.

This is especially important because many UI workflows require complex prerequisite data. Building all of that state through the UI would have made the suite slower, more brittle, and harder to debug. By shifting setup into API helpers, tests can start from known-good conditions and reserve UI automation for validating user-facing behavior.

Authentication is handled during Playwright global setup using the application's login flow. The authenticated session is stored using Playwright storageState, allowing both browser tests and API helpers to reuse the same session without repeating the login step.

The shared AppApi base class reads the authentication cookie from that state and applies it as a Bearer token for authorized API requests.

Example Base API Class

```typescript
import type { APIRequestContext } from '@playwright/test'

export abstract class AppApi {
  protected context: APIRequestContext
  protected headers: Record<string, string>

  constructor(context: APIRequestContext) {
    this.context = context
    this.headers = {
      'Content-Type': 'application/json'
    }
  }

  protected async getAuthHeaders(): Promise<Record<string, string>> {
    const state = await this.context.storageState()
    const authCookie = state.cookies.find(
      cookie => cookie.name === 'authToken'
    )

    if (!authCookie) {
      throw new Error('Authentication cookie not found in storage state')
    }

    return {
      ...this.headers,
      Authorization: `Bearer ${authCookie.value}`
    }
  }
}
```
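The cookie lookup in `getAuthHeaders()` can also be expressed as a pure function, which makes it easy to unit test and extend. The sketch below mirrors the cookie fields that Playwright's `storageState()` returns (`name`, `value`, and `expires`, which is Unix time in seconds, with `-1` for session cookies) and adds an expiry check. The expiry check and the `bearerHeadersFrom` name are assumptions, not part of the source; the idea is that a stale saved session fails with a clear message instead of surfacing as a 401 mid-test.

```typescript
// Minimal subset of the cookie shape returned by Playwright's storageState().
interface StoredCookie {
  name: string
  value: string
  expires: number // Unix time in seconds; -1 for session cookies
}

interface StoredState {
  cookies: StoredCookie[]
}

// Hypothetical helper: builds auth headers, failing fast on a missing or expired cookie.
export function bearerHeadersFrom(
  state: StoredState,
  cookieName = 'authToken',
  nowSeconds = Date.now() / 1000
): Record<string, string> {
  const cookie = state.cookies.find(c => c.name === cookieName)
  if (!cookie) {
    throw new Error(`Authentication cookie "${cookieName}" not found in storage state`)
  }
  if (cookie.expires !== -1 && cookie.expires <= nowSeconds) {
    throw new Error(`Authentication cookie "${cookieName}" has expired; re-run global setup`)
  }
  return {
    'Content-Type': 'application/json',
    Authorization: `Bearer ${cookie.value}`
  }
}
```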

API helpers extend the shared AppApi base class so that authentication, headers, and request configuration are centralized in one place. Individual helpers such as AssetApi focus only on domain-specific operations, allowing tests to create and clean up application state without duplicating request logic or authentication handling.

Example Asset API Helper

```typescript
import { expect } from '@playwright/test'
import { AppApi } from 'api/AppApi'
import type { AssetEntity } from 'config/types'

// Inherits the request context and auth handling from AppApi
export class AssetApi extends AppApi {
  private readonly endpoints = {
    assets: '/api/assets'
  }

  async createAsset(payload: Partial<AssetEntity>): Promise<AssetEntity> {
    const headers = await this.getAuthHeaders()
    const response = await this.context.post(this.endpoints.assets, {
      headers,
      data: payload
    })
    // toBeOK() accepts any 2xx status, e.g. 200 OK or 201 Created
    await expect(response).toBeOK()
    return await response.json()
  }

  async deleteAsset(assetId: string): Promise<void> {
    const headers = await this.getAuthHeaders()
    const response = await this.context.delete(
      `${this.endpoints.assets}/${assetId}`,
      { headers }
    )
    await expect(response).toBeOK()
  }
}
```

This kind of abstraction removes repetitive setup from the test layer and allows the framework to use the fastest and most reliable mechanism for establishing state, while keeping UI automation focused on the behavior users actually experience.

Selector Strategy Improvements

One of the main causes of instability in the legacy suite was brittle selector usage. Tests often depended on DOM structure, styling hooks, or implementation details that changed frequently. To address that, I worked with the engineering team to instrument the application with standardized data-test-id attributes designed specifically for automation.

These identifiers follow shared naming conventions so selectors remain predictable even when dealing with dynamic entities, grouped content, or indexed UI structures. The important architectural rule is that selector ownership belongs to the page object or component model layer, not to the test itself.

That means tests interact through descriptive methods and typed entities while the UI abstraction layer owns the locator details. This reduces maintenance overhead and prevents locator changes from rippling through large portions of the test suite.

With the framework standardized around typed entities and stable naming conventions, the UI can expose durable automation hooks tied to meaningful domain data rather than fragile positional selectors.

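Application components expose stable automation hooks using data-test-id attributes tied to domain entities. The Playwright framework consumes these identifiers through page objects and component models rather than exposing selectors directly to tests. Because Playwright's getByTestId() matches data-testid by default, using data-test-id requires setting the testIdAttribute option in the Playwright configuration.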
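Since the same identifier string is written in the React component and read in the component model, the two can drift apart. One way to prevent that, sketched here with a hypothetical `testIds` module that both codebases would import, is to generate identifiers from the domain entity in one place. Only the `asset-${Id}` pattern comes from the source; the grouped-row builder is illustrative.

```typescript
interface AssetEntity {
  Id: string
  Name: string
  AssetType: string
}

// Hypothetical shared module: the single definition of entity-based test ids.
export const testIds = {
  // Matches the `asset-${asset.Id}` convention used by AssetCard and AssetCardModel.
  asset: (asset: Pick<AssetEntity, 'Id'>): string => `asset-${asset.Id}`,

  // Illustrative builder for indexed content inside a named group.
  groupRow: (group: string, index: number): string => {
    if (!Number.isInteger(index) || index < 0) {
      throw new Error(`Row index must be a non-negative integer, got ${index}`)
    }
    return `group-${group}-row-${index}`
  }
} as const
```

The component would then render `data-test-id={testIds.asset(asset)}` and the component model would call `page.getByTestId(testIds.asset(this.asset))`, so a rename happens in exactly one place.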

The following example comes from the application codebase.

Example React Component

```tsx
import type { AssetEntity } from 'config/types'

export function AssetCard({ asset }: { asset: AssetEntity }) {
  return (
    <div data-test-id={`asset-${asset.Id}`}>
      <h3>{asset.Name}</h3>
      <span>{asset.AssetType}</span>
    </div>
  )
}
```

In practice, that produces selectors that are both human-readable and resilient to layout changes.

Example Rendered HTML

```html
<div data-test-id="asset-12345">
  ...
</div>
```

Test Isolation

A major focus of the migration was eliminating test dependencies. In the legacy suite, tests often relied on data created by earlier tests, which meant one failure could trigger a cascade of unrelated failures. That made the suite noisy, hard to diagnose, and fundamentally unsafe to parallelize.

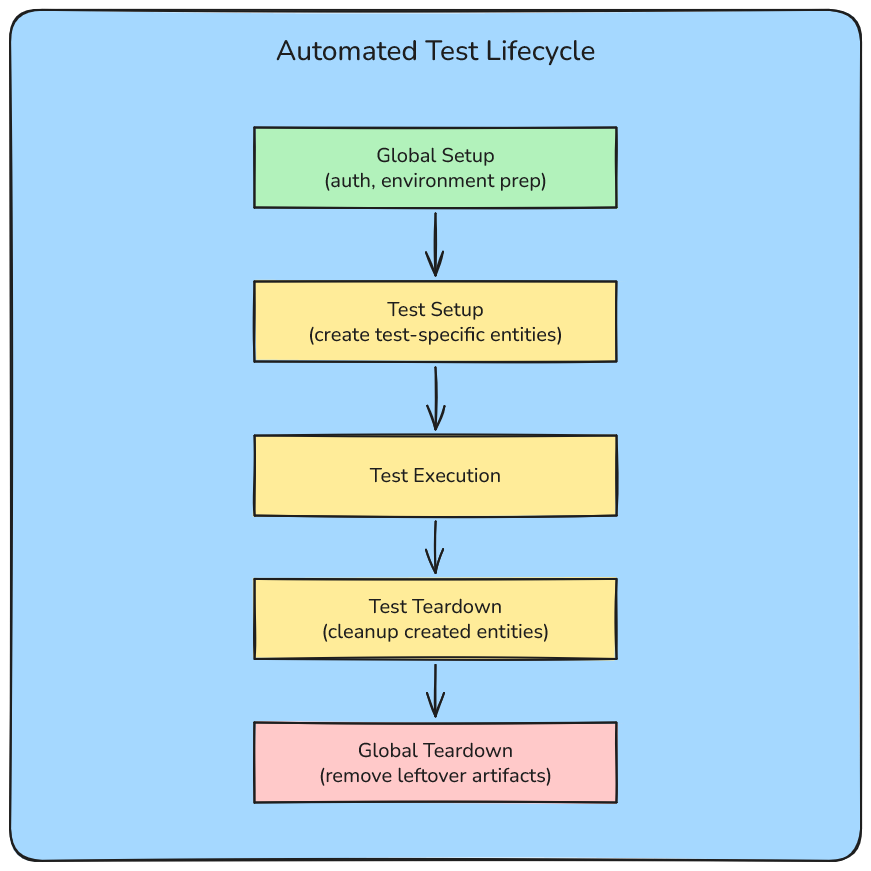

In the new framework, each test establishes the exact state it requires and cleans up after execution. Shared environment prerequisites are handled globally, but application-specific entities are created and removed as part of the test lifecycle.

- Each test creates its own entities

- Tests clean up resources after execution

- Global setup configures required environment state

- Global teardown removes leftover test artifacts

All test-created entities follow strict naming conventions so automated cleanup logic can safely identify and remove them without affecting data created manually by developers or other environments. This is an important operational safeguard, not just a coding convenience.

Lifecycle of an automated test within the framework. Global setup prepares shared environment configuration, while each test manages its own setup and teardown to create and remove required entities. This prevents state leakage between test runs.
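The source does not spell out the naming convention, so the prefix and format below are assumptions. The idea is that every entity a test creates carries a recognizable marker (here including a run id and a worker index, which in Playwright could come from `testInfo.workerIndex`), and global teardown only deletes entities that match it, optionally restricted to the current run.

```typescript
// Assumed convention: e2e-<runId>-w<workerIndex>-<suffix>
const TEST_PREFIX = 'e2e'

interface NamedEntity {
  Id: string
  Name: string
}

// Build a collision-free name for an entity created by one worker in one run.
export function testEntityName(runId: string, workerIndex: number, suffix: string): string {
  return `${TEST_PREFIX}-${runId}-w${workerIndex}-${suffix}`
}

// True only for names that follow the convention; manually created data never matches.
export function isTestArtifact(name: string, runId?: string): boolean {
  const scope = runId === undefined ? '.+' : escapeRegExp(runId)
  return new RegExp(`^${TEST_PREFIX}-${scope}-w\\d+-.+$`).test(name)
}

// Pick the entities global teardown may safely delete.
export function selectForCleanup<T extends NamedEntity>(entities: T[], runId?: string): T[] {
  return entities.filter(entity => isTestArtifact(entity.Name, runId))
}

// Escape regex metacharacters so a run id is matched literally.
function escapeRegExp(text: string): string {
  return text.replace(/[.*+?^${}()|[\]\\]/g, '\\$&')
}
```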

UI Workflow Tests

In practice, the test layer combines typed API-created state with reusable page objects and component models. The test itself remains focused on the user workflow rather than on setup plumbing or selector details.

Example UI Workflow Test

```typescript
import { test } from '@playwright/test'
import { AssetsPage } from 'pageObjects/AssetsPage'
import type { AssetEntity } from 'config/types'

test('user can open asset details from an asset card', async ({ page }) => {
  // A literal entity keeps this example short; in the suite this state
  // would be provisioned through an API helper such as AssetApi
  const asset: AssetEntity = {
    Id: '12345',
    Name: 'Lobby Display',
    AssetType: 'Widget'
  }
  const assetsPage = new AssetsPage(page)

  await test.step('open the assets page', async () => {
    await assetsPage.navigateTo()
    await assetsPage.expectVisible()
  })

  await test.step('open the asset card', async () => {
    const assetCard = assetsPage.getAssetCard(asset)
    await assetCard.open()
  })

  await test.step('verify asset details are visible', async () => {
    const detailsPanel = assetsPage.getAssetDetailsPanel()
    await detailsPanel.expectVisible()
    await detailsPanel.expectAssetName(asset.Name)
  })
})
```

This keeps the test layer concise and readable while still taking advantage of the framework abstractions built underneath it.

Test Setup and Teardown

Tests are structured around deterministic setup, explicit execution steps, and clear teardown logic. API helpers create the required entities, the test exercises the UI workflow under controlled conditions, and cleanup removes both application data and supporting resources.

This approach ensures the scenario can be run repeatedly without depending on pre-existing state, which was essential for both reliability and parallel execution.

Example Test Setup and Lifecycle

```typescript
import 'dotenv/config'
import { faker } from '@faker-js/faker'
import { test } from '@playwright/test'
import { AssetApi } from 'api/AssetApi'
import { AssetsPage } from 'pageObjects/AssetsPage'
import { AssetDetailsPanel } from 'pageObjects/AssetDetailsPanel'
import type { AssetEntity } from 'config/types'

let asset: AssetEntity

test.beforeAll(async ({ request }) => {
  // Provision prerequisite state through the API rather than the UI
  asset = await new AssetApi(request).createAsset({
    Name: `asset-${faker.string.alphanumeric(6)}`,
    AssetType: 'Widget'
  })
})

test('user can view asset details', async ({ page }) => {
  const assetsPage = new AssetsPage(page)
  const assetDetailsPanel = new AssetDetailsPanel(page)

  await test.step('open the assets page', async () => {
    await assetsPage.navigateTo()
    await assetsPage.expectVisible()
  })

  await test.step('select an asset card', async () => {
    await assetsPage.getAssetCard(asset).open()
  })

  await test.step('verify asset details are visible', async () => {
    await assetDetailsPanel.expectVisible()
    await assetDetailsPanel.expectAssetName(asset.Name)
  })
})

test.afterAll(async ({ request }) => {
  // The request fixture from beforeAll is torn down when that hook ends,
  // so cleanup uses the instance provided to this hook
  if (asset) {
    await new AssetApi(request).deleteAsset(asset.Id)
  }
})
```

Designing tests this way makes failures more actionable. When a scenario breaks, it is much more likely to indicate a real product issue or a legitimate automation regression rather than contaminated state from another test.

Results

The migration produced both technical and organizational impact. By redesigning the automation architecture around deterministic setup and test isolation, the suite became significantly faster, more reliable, and trustworthy enough to function as a real engineering feedback signal rather than a source of noise.

- Regression runtime reduced from 2.5+ hours to under 40 minutes, enabling full-suite execution in CI and practical local runs for engineers

- Parallel execution safely enabled through test isolation and removal of cross-test dependencies

- Flaky failures dramatically reduced through stable selectors, deterministic synchronization, and isolated test state

- Automation restored as a reliable release signal, allowing teams to trust regression results during development and release validation

- Framework architecture designed for long-term scalability, making it easier to add new coverage without increasing fragility or maintenance overhead

- 600+ tests migrated into the new architecture without sacrificing coverage

The migration was led as a cross-team initiative, aligning test architecture, engineering workflows, and CI execution so that automation could once again provide fast, reliable feedback across the development lifecycle.

Key Takeaways

The most important lesson from this migration was that automation reliability depends far more on architecture than on the testing tool itself. Playwright provides strong capabilities, but the real impact comes from designing the framework around test isolation, deterministic setup, stable selectors, and reusable abstractions.

Treating the migration as a platform redesign rather than a simple tool replacement allowed the automation system to provide faster feedback, reduce maintenance overhead, and scale alongside the product as new features and tests were added.